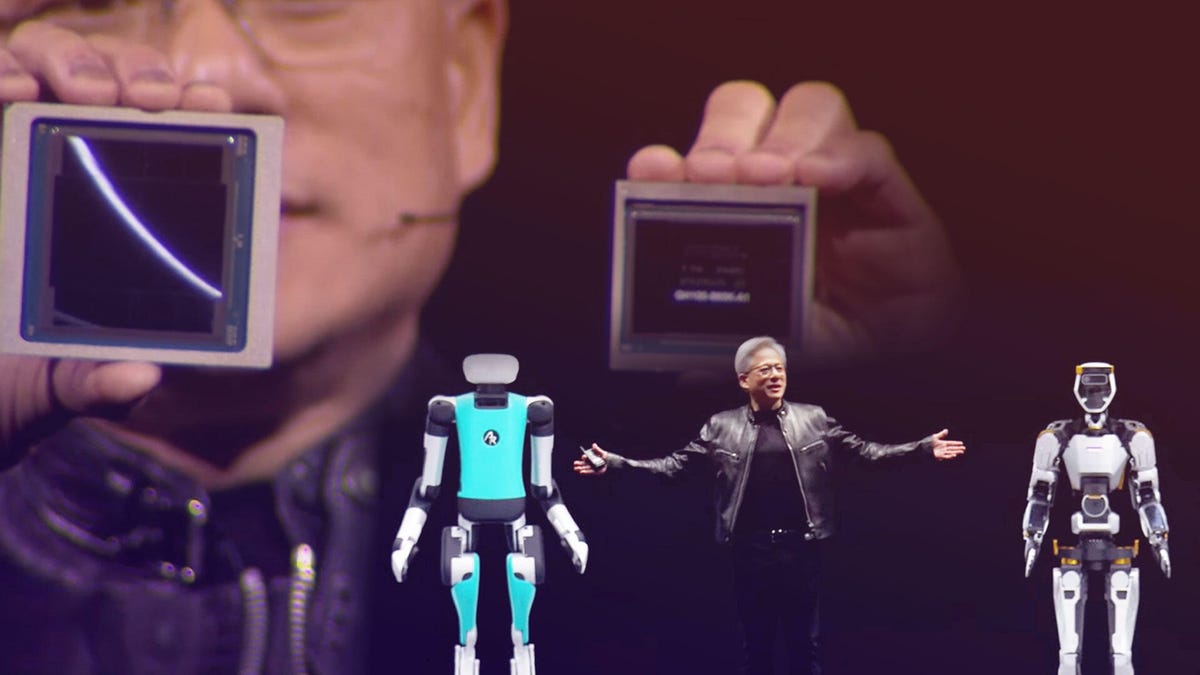

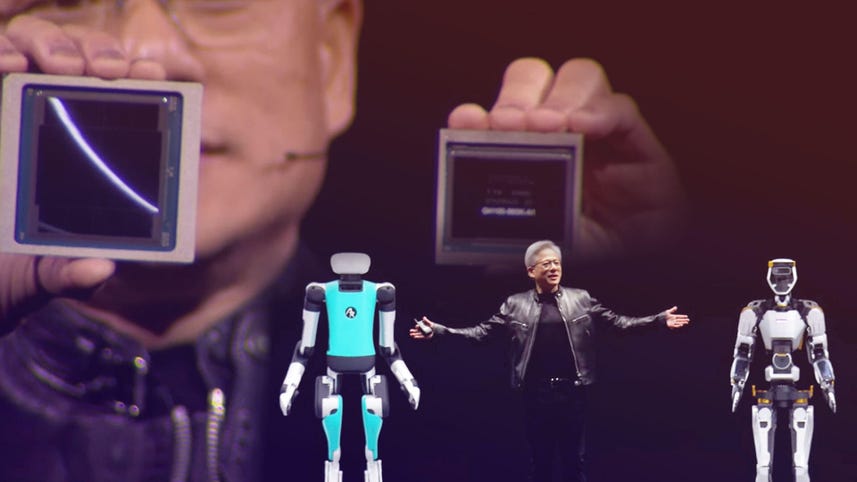

Speaker 1: I hope you realize this is not a concert. You have arrived at a developers’ conference. There will be a lot of science described: algorithms, computer architecture, mathematics. Blackwell is not a chip. Blackwell is the name of a platform. People think we make GPUs, and we do, [00:00:30] but GPUs don’t look the way they used to. This is Hopper. Hopper changed the world. This is Blackwell. It’s okay. Hopper

Speaker 1: 208 billion transistors. And so you could see, I can see that there’s [00:01:00] a small line between two dies. This is the first time two dies have abutted like this together in such a way that the two dies think it’s one chip. There’s 10 terabytes of data between it, 10 terabytes per second, so that these two sides of the Blackwell chip have no clue which side they’re on. There’s no memory locality issues, no cache issues. It’s just one giant chip, and it goes into two types of systems. [00:01:30] The first one, it’s form, fit, function compatible with Hopper, and so you slide out Hopper and you push in Blackwell. That’s the reason why one of the challenges of ramping is going to be so efficient. There are installations of Hoppers all over the world, and they could be the same infrastructure, same design, the power, the electricity, the thermals, the software identical, push it right back. And so this is a Hopper version [00:02:00] for the current HGX configuration, and this is what the second Hopper looks like this. Now this is a prototype board. This is a fully functioning board and I’ll just be careful here. This right here is, I don’t know, $10 billion.

Speaker 1: The second one’s five.

Speaker 1: It gets cheaper after that. So any customers in [00:02:30] the audience? It’s okay. The Grace CPU has a super fast chip-to-chip link. What’s amazing is this computer is the first of its kind where this much computation, first of all, fits into this small of a place. Second, it’s memory coherent. They feel like they’re just one big happy family working on one application together. We created a processor for the generative AI era, and one of the most important [00:03:00] parts of it is content token generation, we call it. This format is FP4. The rate at which we’re advancing computing is insane and it’s still not fast enough, so we built another chip. This chip is just an incredible chip. We call it the NVLink Switch. It’s 50 billion transistors. It’s almost the size of Hopper all by itself. This switch chip has four NVLinks in it, each 1.8 [00:03:30] terabytes per second, and it has computation in it, as I mentioned. What is this chip for? If we were to build such a chip, we can have every single GPU talk to every other GPU at full speed at the same time. You can build a system that looks like this.
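[Editor’s sketch: the switch figures quoted above (four NVLinks at 1.8 terabytes per second each) can be multiplied out to give a per-switch total. The function and constant names below are illustrative assumptions, not anything from the talk, and the result is only the simple product of the two quoted numbers, not a measured or official specification.]

```python
# Figures as quoted in the talk; names are hypothetical, chosen for illustration.
LINKS_PER_SWITCH = 4        # "four NVLinks in it"
TB_PER_SEC_PER_LINK = 1.8   # "each 1.8 terabytes per second"


def switch_aggregate_bandwidth(links: int = LINKS_PER_SWITCH,
                               per_link_tb_s: float = TB_PER_SEC_PER_LINK) -> float:
    """Return the simple product of link count and per-link bandwidth, in TB/s."""
    return links * per_link_tb_s


if __name__ == "__main__":
    total = switch_aggregate_bandwidth()
    print(f"Aggregate per switch chip: {total:.1f} TB/s")
```

[Under these quoted figures the product comes to 7.2 terabytes per second per switch chip.]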

Speaker 1: [00:04:00] Now, this system, this system is kind of insane. This is one DGX. This is what a DGX looks like. Now, just so you know, there are only a couple, two, three exaflops machines on the planet as we speak, and so this is an exaflops AI system in one single rack. I want to thank some partners that are joining us in this. AWS [00:04:30] is gearing up for Blackwell. They’re going to build the first GPU with secure AI. They’re building out a 222 exaflops system. We’re CUDA-accelerating SageMaker AI. We’re CUDA-accelerating Bedrock AI. Amazon Robotics is working with us using Nvidia Omniverse and Isaac Sim. AWS Health has Nvidia Health integrated into it. So AWS has really leaned into accelerated computing. Google is [00:05:00] gearing up for Blackwell. GCP already has A100s, H100s, T4s, L4s, a whole fleet of Nvidia CUDA GPUs, and they recently announced a Gemma model that runs across all of it.

Speaker 1: We’re working to optimize and accelerate every aspect of GCP. We’re accelerating Dataproc for data processing, their data processing engine, JAX, XLA, Vertex AI, and MuJoCo for robotics. So we’re working with Google and GCP across a whole bunch [00:05:30] of initiatives. Oracle is gearing up for Blackwell. Oracle is a great partner of ours for Nvidia DGX Cloud, and we’re also working together to accelerate something that’s really important to a lot of companies: Oracle Database. Microsoft is accelerating, and Microsoft is gearing up for Blackwell. Microsoft and Nvidia have a wide-ranging partnership. We’re accelerating, CUDA-accelerating, all kinds of services. When you chat, obviously, and AI services that are in Microsoft Azure, it’s very, very likely [00:06:00] Nvidia’s in the back doing the inference and the token generation. They built the largest Nvidia InfiniBand supercomputer, basically a digital twin of ours or a physical twin of ours. We’re bringing the Nvidia ecosystem to Azure, Nvidia DGX Cloud to Azure.

Speaker 1: Nvidia Omniverse is now hosted in Azure. Nvidia Healthcare is in Azure, and all of it is deeply integrated and deeply connected with Microsoft Fabric. A NIM, it’s a pre-trained model, so it’s pretty clever, [00:06:30] and it is packaged and optimized to run across Nvidia’s install base, which is very, very large. What’s inside it is incredible. You have all these pre-trained, state-of-the-art open source models. They could be open source, they could be from one of our partners. It could be created by us like Nvidia moment. It is packaged up with all of its dependencies. So CUDA, the right version; cuDNN, the right version; TensorRT-LLM, distributing across the multiple GPUs; Triton [00:07:00] Inference Server, all completely packaged together. It’s optimized depending on whether you have a single GPU, multi-GPU, or multi-node of GPUs. It’s optimized for that, and it’s connected up with APIs that are simple to use.

Speaker 1: These packages, incredible bodies of software, will be optimized and packaged and we’ll put it on a website and you can download it, you can take it with you, you can run it in any cloud, you can run it in your own data center. You can [00:07:30] run it in workstations if it fits. And all you have to do is come to ai.nvidia.com. We call it Nvidia Inference Microservice, but inside the company, we all call it NIMs. We have a service called NeMo microservice that helps you curate the data, preparing the data so that you could teach this, onboard this AI. You fine-tune them and then you guardrail it. You can even evaluate the answer, evaluate its performance against other examples. And so we are effectively an AI [00:08:00] foundry. We will do for you and the industry on AI what TSMC does for us building chips. And so we go to it, go to TSMC with our big ideas.

Speaker 1: They manufacture it and we take it with us. And so exactly the same thing here. AI Foundry, and the three pillars are the NIMs, NeMo microservice, and DGX Cloud. We’re announcing that Nvidia AI Foundry is working with some of the world’s great companies. SAP generates 87% of the world’s global commerce. [00:08:30] Basically the world runs on SAP, we run on SAP. Nvidia and SAP are building SAP Joule copilots using Nvidia NeMo and DGX Cloud. ServiceNow: 80, 85% of the world’s Fortune 500 companies run their people and customer service operations on ServiceNow, and they’re using Nvidia AI Foundry to build ServiceNow Assist virtual assistants. Cohesity backs up the world’s data. They’re sitting on a gold mine [00:09:00] of data, hundreds of exabytes of data. Over 10,000 companies. Nvidia AI Foundry is working with them, helping them build their Gaia generative AI agent. Snowflake is a company that stores the world’s digital warehouse in the cloud and serves over 3 billion queries a day for 10,000 enterprise customers.

Speaker 1: Snowflake is working with Nvidia AI Foundry to build copilots with Nvidia NeMo [00:09:30] and NIMs. NetApp: nearly half of the files in the world are stored on-prem on NetApp. Nvidia AI Foundry is helping them build chatbots and copilots like those vector databases and retrievers with Nvidia NeMo and NIMs. And we have a great partnership with Dell. Everybody who is building these chatbots and generative AI, when you’re ready to run it, you’re going to need an AI factory, [00:10:00] and nobody is better at building end-to-end systems of very large scale for the enterprise than Dell. And so anybody, any company, every company will need to build AI factories. And it turns out that Michael is here. He’s happy to take your order. We need a simulation engine that represents the world digitally for the robot so that the robot has a gym to go learn how to be a robot. We call that [00:10:30] virtual world Omniverse, and the computer that runs Omniverse is called OVX, and OVX, the computer itself, is hosted in the Azure cloud.

Speaker 2: The future of heavy industries starts as a digital twin. The AI agents helping robots, workers and infrastructure navigate unpredictable events in complex industrial spaces will be built and evaluated first in sophisticated digital twins.

Speaker 1: Once you connect everything together, it’s insane [00:11:00] how much productivity you can get, and it’s just really, really wonderful. All of a sudden, everybody’s operating on the same ground truth. You don’t have to exchange data and convert data, make mistakes. Everybody is working on the same ground truth, from the design department to the art department, the architecture department, all the way to the engineering and even the marketing department. Today we’re announcing that Omniverse Cloud streams to the Vision Pro, and [00:11:30] it is very, very strange that you walk around virtual doors when I was getting out of that car, and everybody does it. It is really, really quite amazing. Vision Pro, connected to Omniverse, portals you into Omniverse. And because all of these CAD tools and all these different design tools are now integrated and connected to Omniverse, you can [00:12:00] have this type of workflow. Really incredible.

Speaker 3: This is Nvidia Project GR00T, a general purpose foundation model for humanoid robot learning. The GR00T model takes multimodal instructions and past interactions as input and produces the next action for the robot to execute. We developed Isaac Lab, a robot learning application, [00:12:30] to train GR00T on Omniverse Isaac Sim, and we scale out with OSMO, a new compute orchestration service that coordinates workflows across DGX systems for training and OVX systems for simulation. The GR00T model will enable a robot to learn from a handful of human demonstrations so it can help with everyday tasks and emulate human movement just by observing us. All this incredible intelligence [00:13:00] is powered by the new Jetson Thor robotics chips, designed for GR00T, built for the future. With Isaac Lab, OSMO, and GR00T, we’re providing the building blocks for the next generation of AI-powered robotics.

Speaker 1: About [00:13:30] the same size. The soul of Nvidia: the intersection of computer graphics, physics, artificial intelligence. It all came to bear at this moment. The name of that project, General Robotics 003. I know. Super good, super [00:14:00] good. Well, I think we have some special guests, do we? Hey guys, I understand you guys are powered by Jetson. They’re powered by Jetsons, little Jetson robotics computers inside. [00:14:30] They learned to walk in Isaac Sim. Ladies and gentlemen, this is Orange and this is the famous Green. They are the BDX robots of Disney. Amazing Disney Research. Come on, you guys. Let’s wrap up. Let’s go. Five things. Where are you going? [00:15:00] I sit right here. Don’t be afraid. Come here, Green. Hurry up. What are you saying? No, it’s not time to eat. It’s not time to eat. I’ll give you a snack in a moment. Let me finish up real quick. Come on, Green. [00:15:30] Hurry up. Wasting time. This is what we announced to you today. This is Blackwell. This is the amazing, amazing processors, NVLink switches, networking systems, and the system design is a miracle. This is Blackwell, and this to me is what a GPU looks like in my mind.